We're releasing Z01: 1 million autorouting samples for training vision models

Today we’re releasing Z01, 1 million autorouting input and output sample images for training vision models to autoroute 2-layer printed circuits. You can download the dataset from Hugging Face.

Before we get into the details, a huge shout-out to Johnathon Selstad, who inspired and guided me through the process of creating this dataset. Without him, this would not have happened!

This dataset was created by running @tscircuit/high-density-a01 on 1 million randomly generated configurations of connection pairs in a 10x10mm rectangle, with no pads, keepouts, or other obstacles inside the rectangle.
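To make "randomly generated configurations of connection pairs" concrete, here is a minimal Python sketch of what one such configuration might look like. It is not the generator used for Z01. The names `Endpoint`, `ConnectionPair`, and `random_configuration`, the uniform sampling of endpoints, the layer encoding, and the range of pairs per sample are all assumptions made for illustration; only the 10x10mm region and the 2 layers come from the description above.

```python
import random
from dataclasses import dataclass
from typing import List

BOARD_SIZE_MM = 10.0  # side length of the routing region (from the post)
NUM_LAYERS = 2        # the dataset targets 2-layer boards (from the post)


@dataclass(frozen=True)
class Endpoint:
    x: float    # millimetres
    y: float    # millimetres
    layer: int  # 0 = top, 1 = bottom (hypothetical encoding)


@dataclass(frozen=True)
class ConnectionPair:
    name: str
    start: Endpoint
    end: Endpoint


def random_endpoint(rng: random.Random) -> Endpoint:
    # Assumption: endpoints are sampled uniformly over the whole rectangle.
    # The real generator may place them differently.
    return Endpoint(
        x=rng.uniform(0.0, BOARD_SIZE_MM),
        y=rng.uniform(0.0, BOARD_SIZE_MM),
        layer=rng.randrange(NUM_LAYERS),
    )


def random_configuration(
    rng: random.Random, min_pairs: int = 2, max_pairs: int = 8
) -> List[ConnectionPair]:
    # Assumption: the number of pairs per sample is drawn uniformly from
    # [min_pairs, max_pairs]; both bounds are placeholders.
    count = rng.randint(min_pairs, max_pairs)
    return [
        ConnectionPair(f"conn{i}", random_endpoint(rng), random_endpoint(rng))
        for i in range(count)
    ]


if __name__ == "__main__":
    # A fixed seed makes the sample reproducible.
    for pair in random_configuration(random.Random(0)):
        print(pair)
```

Seeding the generator per sample is a convenient choice if you want to regenerate any individual configuration later without storing it.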

This dataset represents the core problem of autorouting: generating viable trace paths that do not cross on the same layer within a dense space. Competing concerns such as obstacle avoidance, DRC compliance, and impedance matching all depend on how you solve the “multi-agent pathfinding” problem at the core of autorouting.
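The "do not cross on the same layer" constraint can be checked with a standard segment-intersection test. The sketch below is only an illustration of that constraint, not part of the tscircuit autorouter: the `Trace` structure and `traces_cross` helper are hypothetical, traces are treated as zero-width polylines, and vias, trace width, and clearance rules are ignored.

```python
from dataclasses import dataclass
from typing import List, Tuple

Point = Tuple[float, float]


@dataclass
class Trace:
    layer: int           # hypothetical encoding: 0 = top, 1 = bottom
    points: List[Point]  # polyline vertices in millimetres


def orientation(p: Point, q: Point, r: Point) -> int:
    """Sign of the cross product (q - p) x (r - p): 1, -1, or 0 if collinear."""
    val = (q[0] - p[0]) * (r[1] - p[1]) - (q[1] - p[1]) * (r[0] - p[0])
    return (val > 0) - (val < 0)


def within_box(p: Point, q: Point, r: Point) -> bool:
    """True if r lies inside the bounding box of segment pq."""
    return (min(p[0], q[0]) <= r[0] <= max(p[0], q[0])
            and min(p[1], q[1]) <= r[1] <= max(p[1], q[1]))


def segments_intersect(a: Point, b: Point, c: Point, d: Point) -> bool:
    """True if segment ab and segment cd share at least one point."""
    o1, o2 = orientation(a, b, c), orientation(a, b, d)
    o3, o4 = orientation(c, d, a), orientation(c, d, b)
    if o1 != o2 and o3 != o4:
        return True
    # Collinear cases: an endpoint of one segment lies on the other.
    return ((o1 == 0 and within_box(a, b, c))
            or (o2 == 0 and within_box(a, b, d))
            or (o3 == 0 and within_box(c, d, a))
            or (o4 == 0 and within_box(c, d, b)))


def traces_cross(t1: Trace, t2: Trace) -> bool:
    """True if two traces on the same layer touch or cross anywhere."""
    if t1.layer != t2.layer:
        return False  # different layers never conflict in this simplified model
    for a, b in zip(t1.points, t1.points[1:]):
        for c, d in zip(t2.points, t2.points[1:]):
            if segments_intersect(a, b, c, d):
                return True
    return False
```

For example, two diagonals of the 10x10mm square conflict when both are on layer 0, but not when one of them is moved to layer 1. A check like this could serve as a rough validity filter on paths recovered from a model's output image, with the caveat that a real design-rule check would also account for trace width and spacing.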

The purpose of this dataset is to enable the development of vision models that can prove the viability of the approach, not to build a full autorouting pipeline or production-ready printed circuit boards.

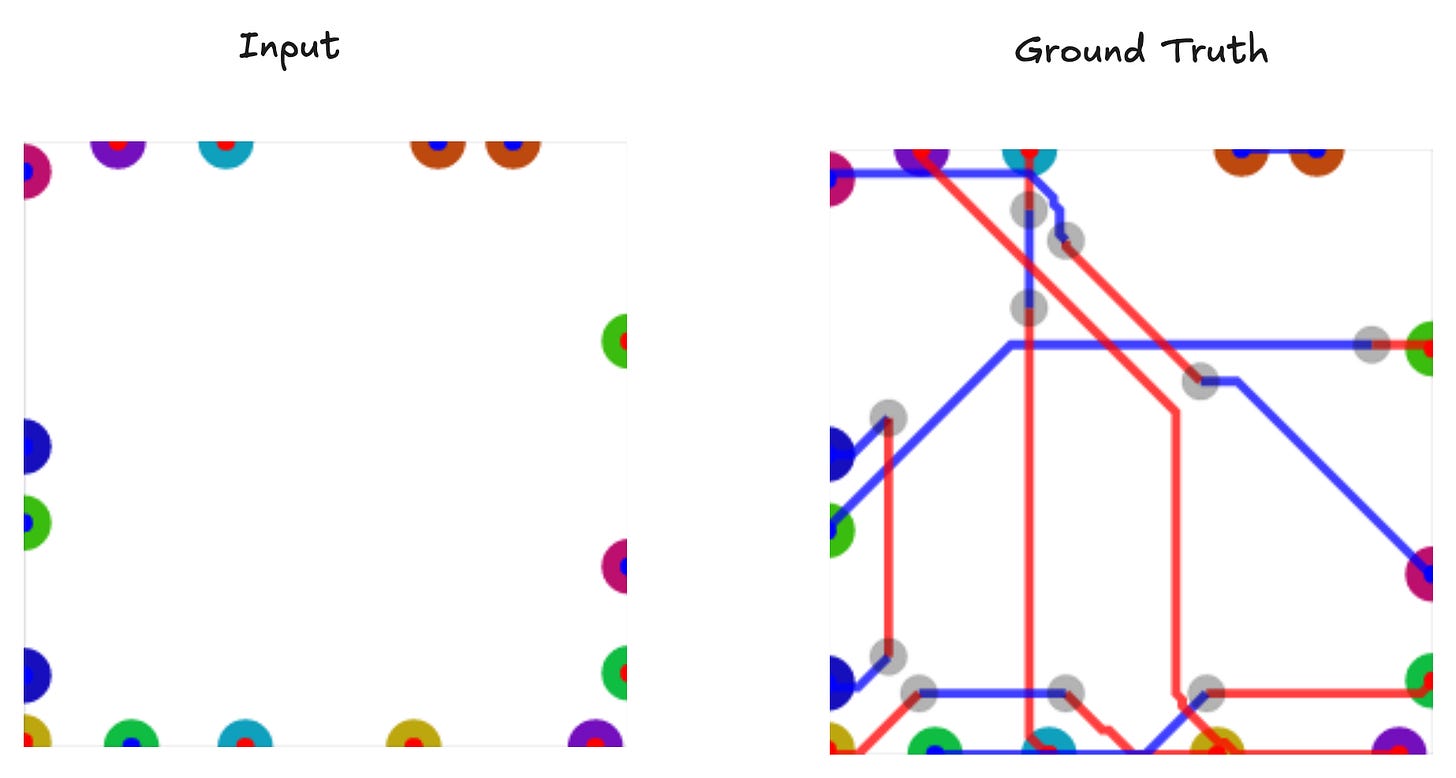

Here is an example of input and output data from the dataset:

The output of this autorouter, after parsing, can be fed directly into the HighDensity phase of the tscircuit autorouter, so it can run as part of a larger autorouting pipeline.

This is far from a comprehensive autorouting dataset that covers all the edge cases and functions needed for a proper pipeline, but it should pave the way for researchers to determine whether vision models are practical for autorouting.